|

I am a second-year Ph.D. candidate supervised by Jaesik Park at the Visual & Geometric Intelligence Lab at SNU. Before starting my Ph.D., I obtained a master's degree from the Graduate School of Mobility at KAIST, where I was a member of AXE Lab. Before that, I earned a bachelor's degree in Automotive Engineering from KMU. Email / CV / Google Scholar / GitHub / LinkedIn |

|

|

|

|

I am interested in 3D computer vision, especially 3D perception and scene understanding for robot vision. My research focuses on multi-modal sensor fusion across camera, LiDAR, and radar to enhance the perception capabilities of autonomous systems. I am also passionate about leveraging visual foundation models for 3D perception. |

|

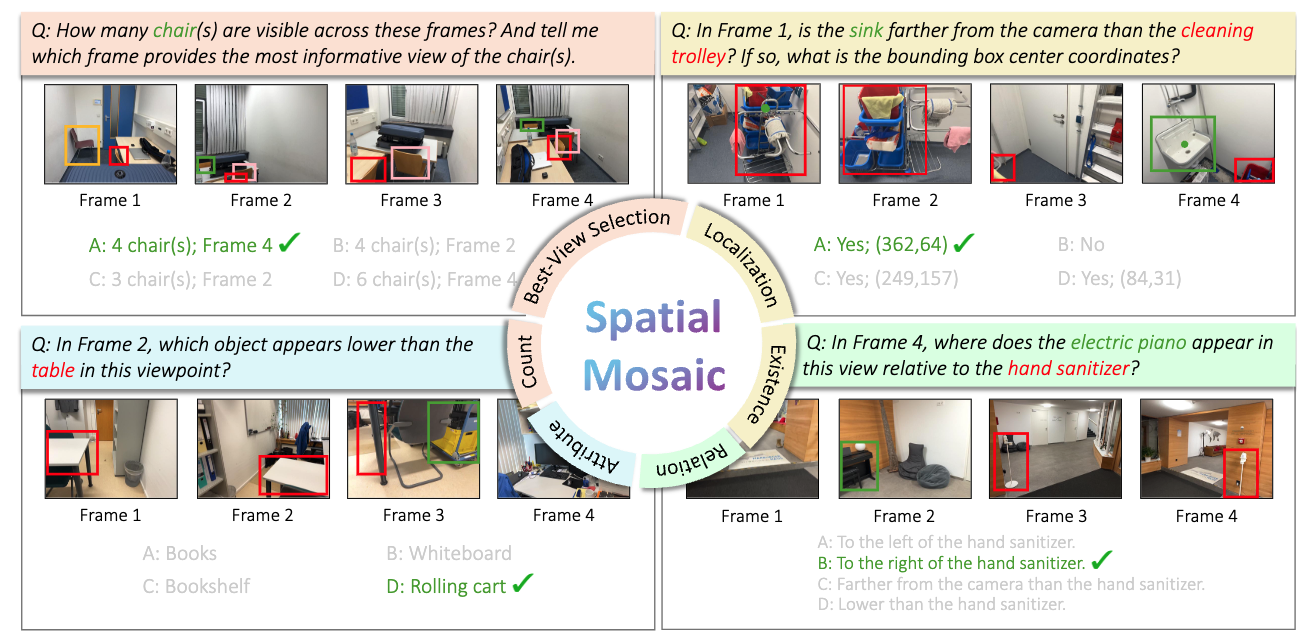

Kanghee Lee, In-Jae Lee, Minseok Kwak, Kwonyoung Ryu, Jungi Hong and Jaesik Park Under Review paper |

|

In-Jae Lee, Moongyeom Kim, Kwonyoung Ryu, Pierre Musacchio and Jaesik Park NeurIPS 2025 (Spotlight) paper | video | project page |

|

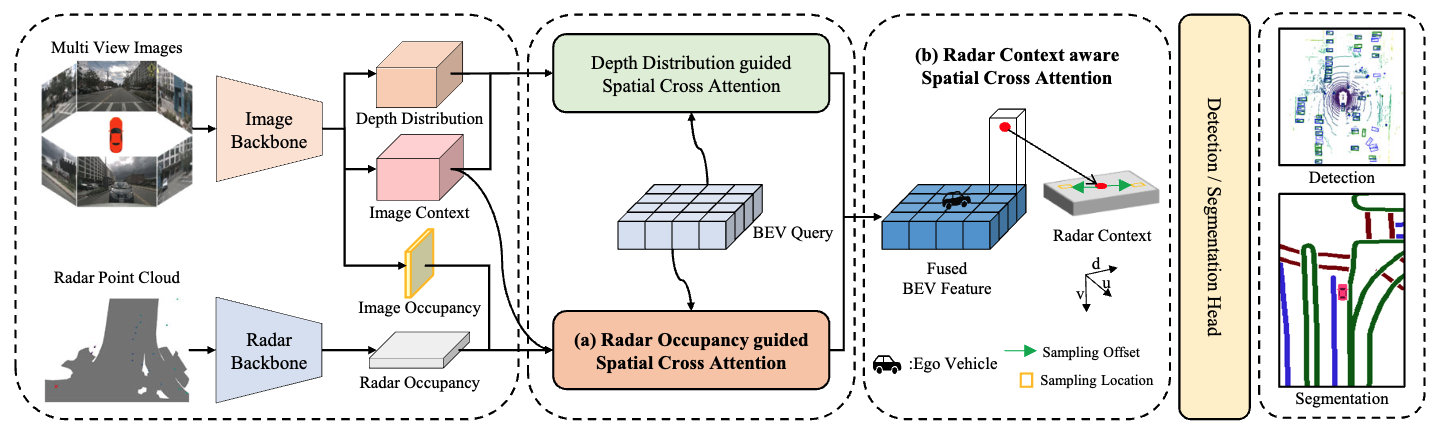

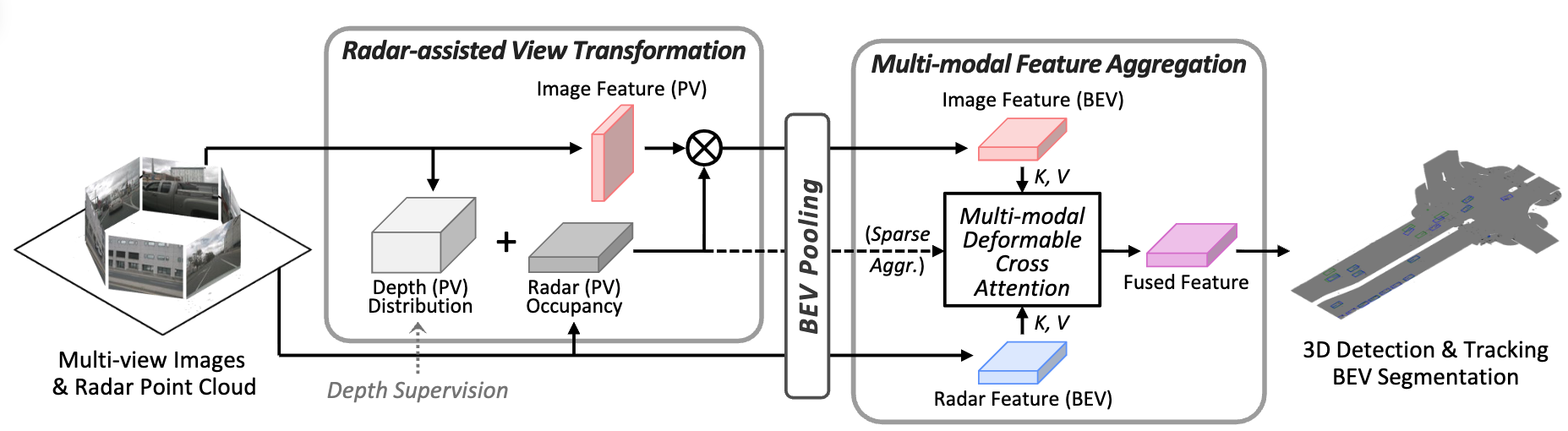

In-Jae Lee, Sihwan Hwang, Youngseok Kim, Wonjune Kim, Sanmin Kim and Dongsuk Kum ICRA 2025 paper |

|

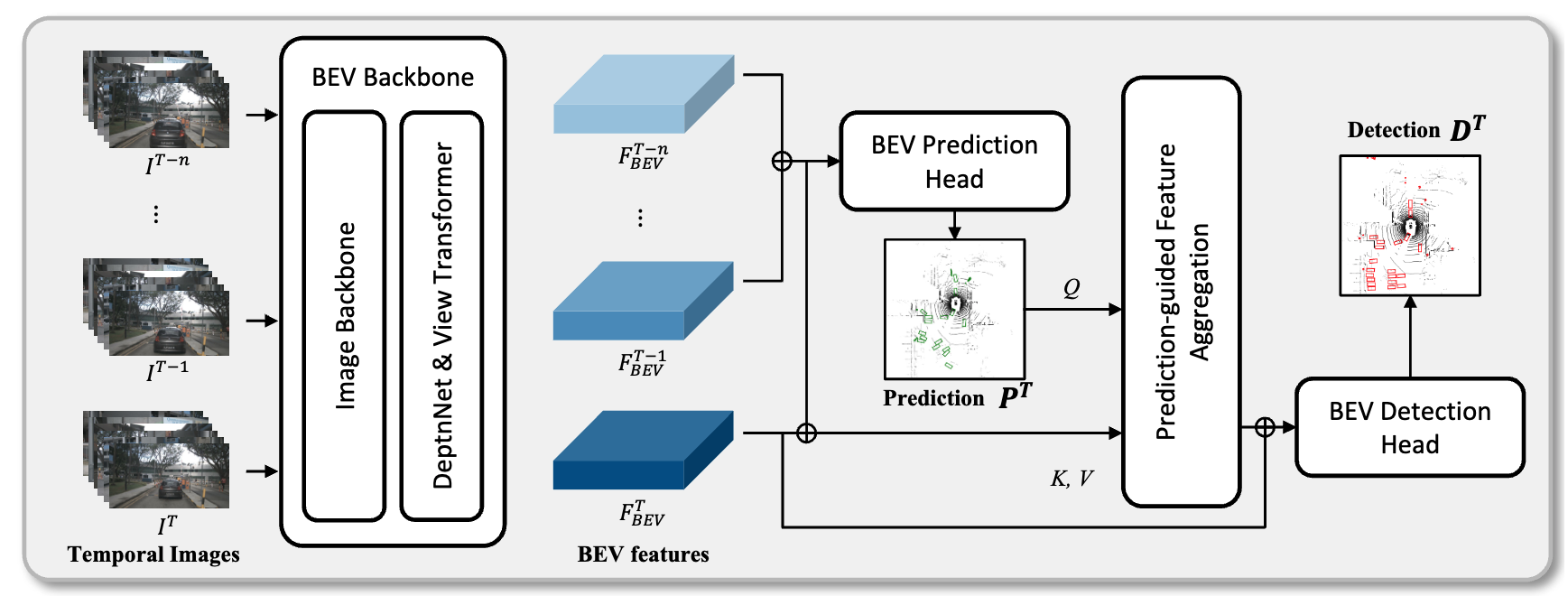

Sanmin Kim, Youngseok Kim, In-Jae Lee and Dongsuk Kum ICCV 2023 paper | code |

|

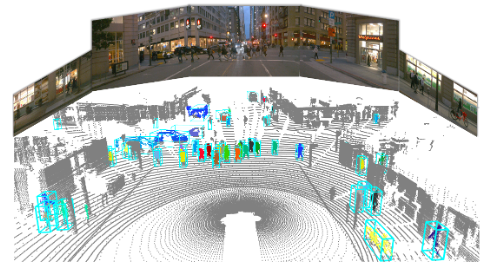

Youngseok Kim, Juyeb Shin, Sanmin Kim, In-Jae Lee, Jun Won Choi and Dongsuk Kum ICCV 2023 paper | video | code |

|

This website's source code is from Jon Barron. |